How to Detect (and Deflect) Negative SEO Attacks

- By Brett Belau

24 Aug

Has your organic search traffic plummeted in recent months, and you can’t figure out why?

While it’s very unlikely these days, negative SEO could be the culprit.

But before we talk about detecting, deflecting, and fighting negative SEO, let’s make sure we understand what it is…

Negative SEO is when a competitor uses black-hat tactics to attempt to sabotage the rankings of a competing website or web page. Not only is this practice unethical, but it’s also sometimes illegal.

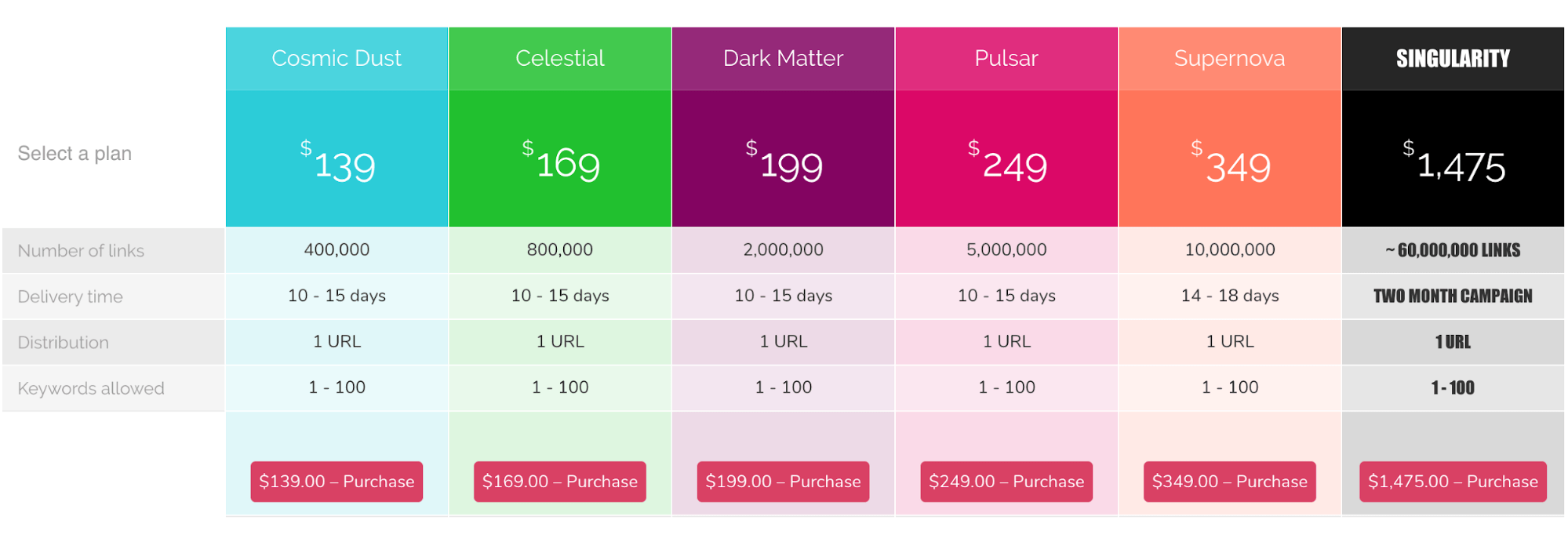

Building low-quality links at scale is perhaps the most common and unsophisticated type of negative SEO because it’s so easy and cheap to do. There are plenty of websites selling thousands of spammy backlinks for next to nothing.

Here’s a site offering 60 million backlinks for $1,475:

That’s around 40,000 links per dollar!

Other common types of negative SEO include:

- Submitting fake link removal requests

- Leaving fake negative reviews

- Hacking sites and other forms of cyber attacks

Does negative SEO still work? Google’s official stance as of 2021 is no, and it’s been that way for many years.

John Mueller, Search Advocate at Google, basically calls negative SEO a meme these days:

I don’t think the meme of negative SEO will ever go away. It’s tempting to assume someone else is causing issues, and, yes, sometimes people have a lot of money, time, bad ideas. Time will tell, and I’m pretty confident it’ll be fine.

— 🍌 John 🍌 (@JohnMu) March 1, 2021

Gary Illyes, another Google representative, has made similar statements:

[I’ve] looked at hundreds of supposed cases of negative SEO, but none have actually been the real reason a website was hurt. […] While it’s easier to blame negative SEO, typically the culprit of a traffic drop is something else you don’t know about–perhaps an algorithm update or an issue with their website.

But many SEO experts will tell you that taking Google’s words at face value isn’t always the best idea. So here’s what we think:

Negative SEO can still work, but it’s much less of a problem than it used to be.

I realize that’s a bold statement, so let me go through why we believe this.

1. Google now devalues link spam instead of demoting sites

Penguin is the part of Google’s core algorithm designed to catch link spam.

Before 2016, it worked like this:

- Penguin sees an influx of spammy links to a website

- The website might get demoted in the organic search results (i.e., rankings and traffic loss)

But then, Google released Penguin 4.0.

Now, rather than demoting entire sites, Google devalues link spam (or at least tries to).

Here’s how Gary Illyes explained the difference between devaluing and demoting:

Demoting as in adjust the rank of a site. Devalue as in “oh look, some crap coming towards this site. Let’s make sure it won’t affect its ranking.”

In short, Google tries to identify and ignore low-quality links so they don’t affect your rankings.

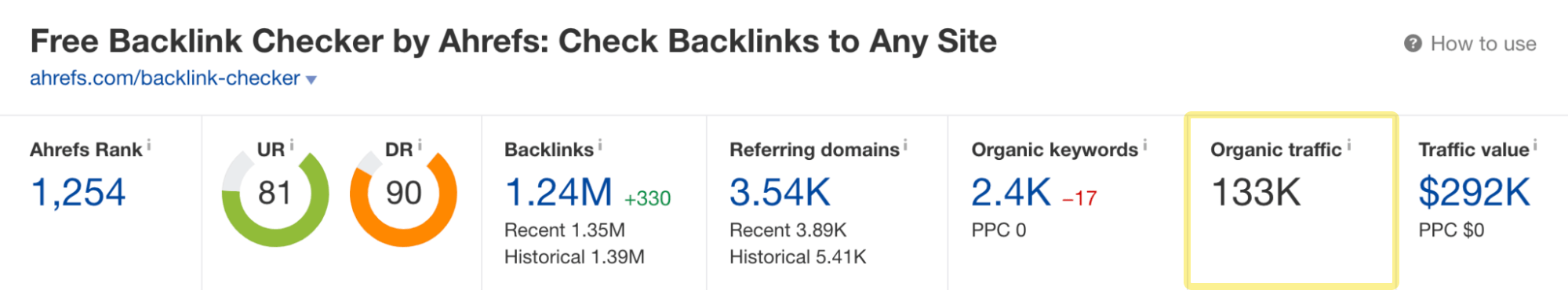

That’s why our free backlink checker gets an estimated 133,000 organic visits per month…

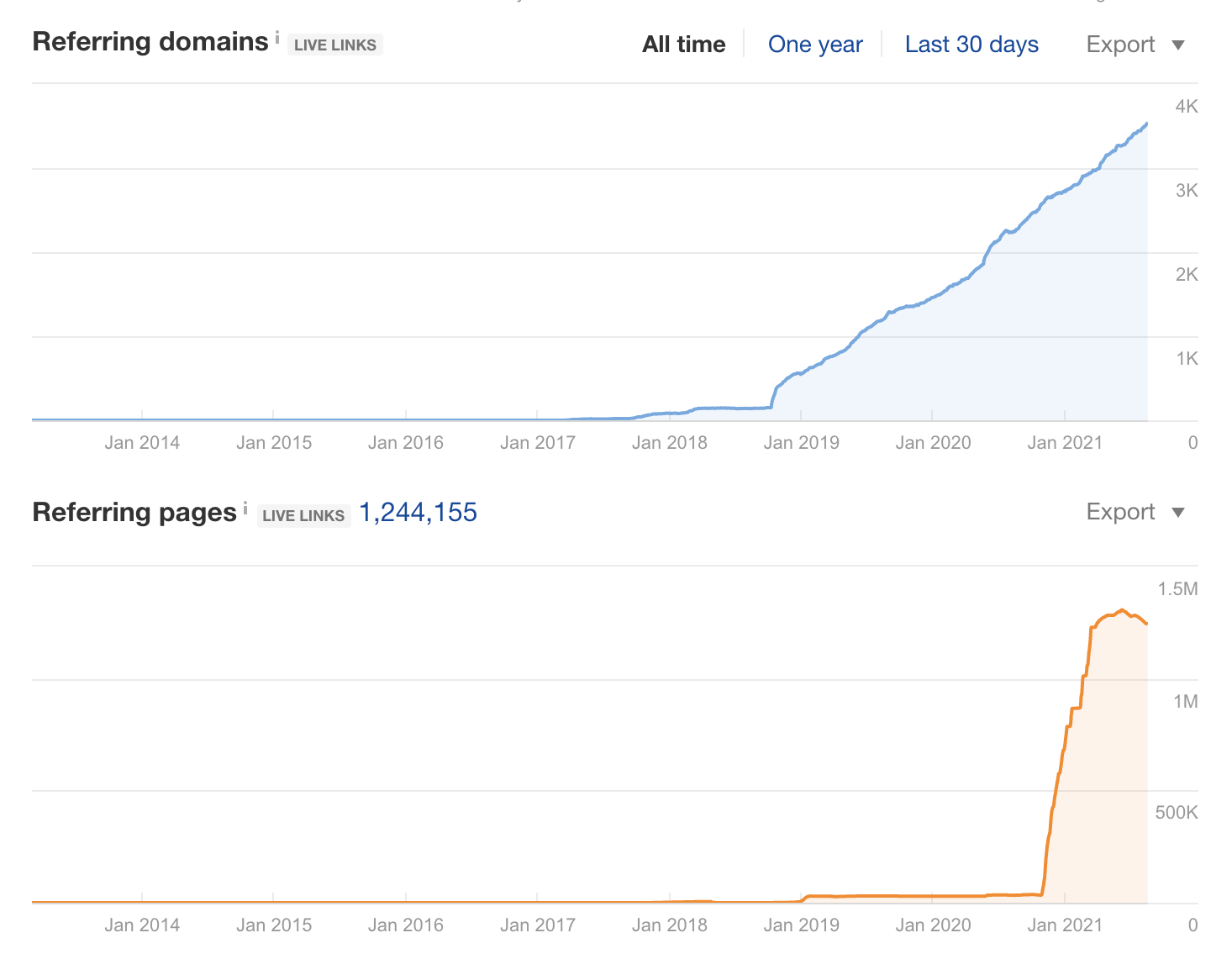

… despite someone kindly linking to it from over a million spammy pages:

Google is clearly doing an excellent job of ignoring that blatant negative SEO attack.

2. Penguin 4.0 is “more granular”

Penguin used to demote entire sites with link spam.

So, if you experienced a negative SEO attack on one page, Penguin would penalize your entire site and rankings would drop across the board.

But since Penguin 4.0, things don’t always work that way.

Here’s what Google said in their official announcement:

Penguin is now more granular. Penguin now devalues spam by adjusting ranking based on spam signals, rather than affecting ranking of the whole site.

Confused? Here’s Google’s “clarification” of what this means:

It means it affects finer granularity than sites. It does not mean it only affects pages.

Still confused? Here’s our best interpretation:

Penguin tries to devalue (ignore) the unsophisticated link spam associated with most negative SEO attacks. However, it still seeks to algorithmically penalize those who intentionally build manipulative links. That’s the whole point of Penguin. If it sees link spam, it may decide to demote the page to which the manipulative links point, a subsection of the website, or the entire website. It depends.

In other words, the chance of a negative SEO attack being successful is lower now than in the pre-Penguin 4.0 era. Moreover, if it is successful, Google probably won’t demote your entire site—so the real-life negative effect is likely to be much less catastrophic than it once was.

3. Google’s business model relies on negative SEO not working

Negative SEO is a tactic usually employed by website owners that can’t rank on merit alone.

Instead of improving their site, they use negative SEO to shoot down the more deserving competitors that rank above them.

That’s kind of like competing with Usain Bolt in the Olympics and tying his shoelaces together.

Nobody would watch the Olympics if that were allowed. There’s no fun in watching a loser cheat their way to the top. Similarly, nobody would use Google if the top-ranking page was always spam. And if nobody uses Google, the company has no ad revenue. Their business would disintegrate.

That’s why Google introduced Penguin 4.0. It’s why it runs in real-time and aims to devalue link spam rather than demote entire websites. And it’s why Google continues to invest in efforts to thwart negative SEO.

4. Link spam isn’t the only kind of negative SEO

The three points above explain why link-based negative SEO attacks are much less of an issue than they used to be.

But not all negative SEO attacks are link-based.

Someone could hack your website and inject spammy links, post fake negative reviews online, or something much worse.

This is an important point to keep in mind.

Detecting and deflecting negative SEO isn’t about finding and disavowing links from shady websites anymore. Now it’s about keeping an eye on your entire online presence and employing positive security measures to keep the “baddies” at bay.

Below I’m going to cover how to spot and defend against these seven types of negative SEO attacks:

- Spammy link building

- Fake link removal requests

- Content scraping

- False URL parameters

- Fake reviews

- Hacking your site

- DDoS attacks

Let’s start with the tactic most commonly associated with negative SEO.

1. Spammy link building

Building tons of low-quality links to a competing site is likely the most prevalent form of negative SEO—and certainly the most unsophisticated.

Whether those spammy links come from cheap Fiverr gigs, Scrapebox comment spam, or a PBN (Private Blog Network), the result is the same: a sudden influx of shady links pointing to your site.

How spam links can harm your site

There are two approaches to link spam when it comes to negative SEO, and an unscrupulous SEO may use either (or indeed both) of them.

- The volume approach: Blasting thousands upon thousands of low-quality links at your site.

- The over-optimized anchor text approach: Pointing lots of links with exact-match anchor text at a ranking page to give it an unnatural anchor text ratio.

Both approaches aim to get your site penalized—either algorithmically by Penguin or through a manual action from Google’s webspam team.

Fortunately, both of these tactics are easy to spot.

How to detect a spam link attack

Here are four methods you can use to detect spam links (that you did not build) pointing to your site.

Method 1: Find spam links in real-time

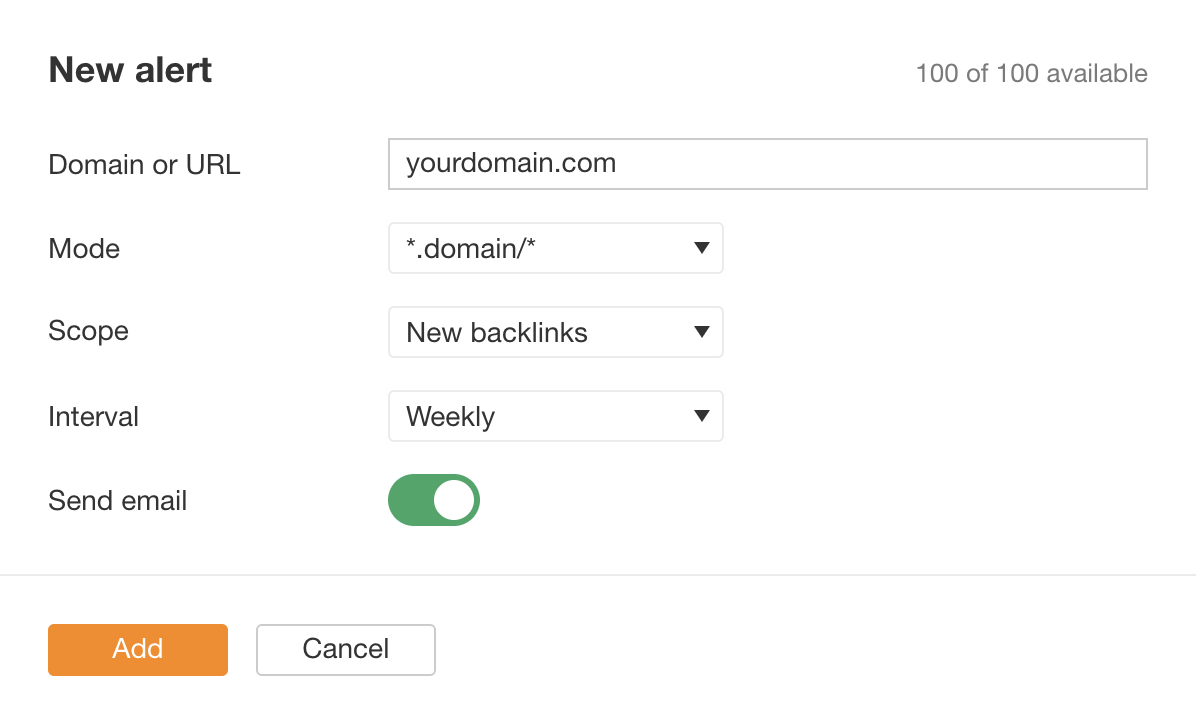

The simplest way to detect an active link spam attack is to monitor new backlinks pointing to your site.

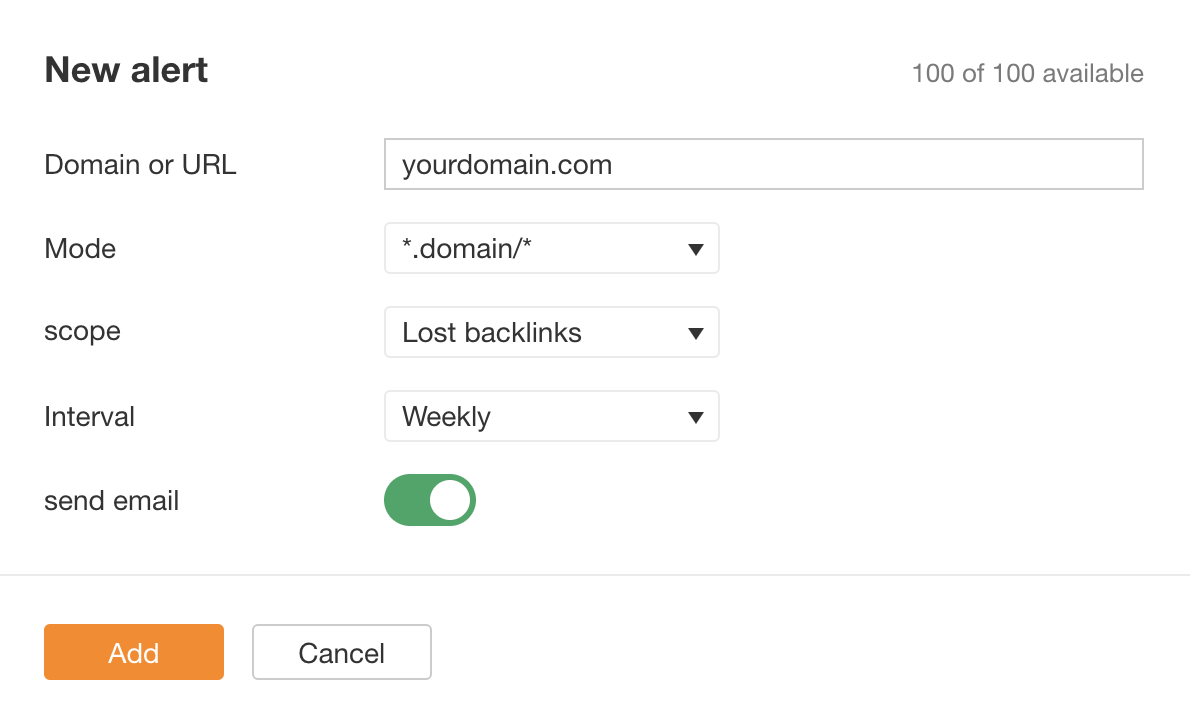

You can do that by setting up a Backlinks alert in Ahrefs’ Alerts.

Alerts > Backlinks > New alert > Enter domain > New backlinks > Set email interval > Add

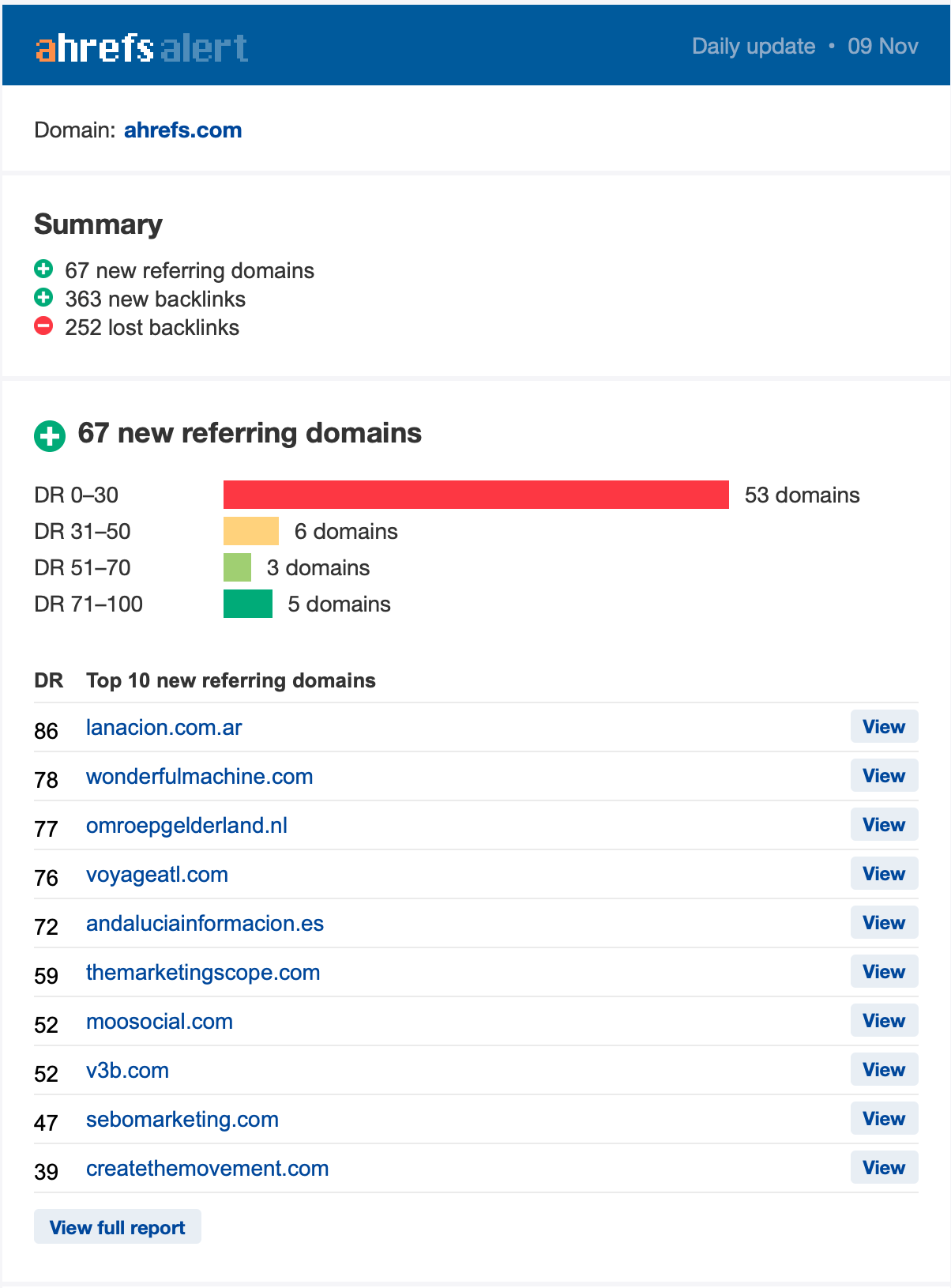

You’ll get a regular email notifying you of all new links Ahrefs has discovered pointing to your site.

The image above shows a standard daily distribution of new referring domains to ahrefs.com. Links from 0–30 DR domains will always be more prevalent. Some of them are spammy. It’s normal and nothing to worry about.

After setting up the alert and looking at the history of new referring domains, you should have an idea of how many new referring domains you typically gain each day. If you see an abnormally high number of new referring domains, it could be a sign of a negative SEO attack.
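If you export the daily counts of new referring domains, a rough way to spot an “abnormally high” day is to compare each day against the typical one. The sketch below is only an illustration: the function name, the sample numbers, and the threshold of 3 are my own assumptions, not anything Ahrefs uses. It relies on the median rather than the average so that the spike itself doesn’t distort the baseline.

```python
from statistics import median


def find_spike_days(daily_new_domains, threshold=3.0):
    """Return the indexes of days whose count sits far above a typical day.

    Uses the median and the median absolute deviation (MAD), which are
    less distorted by the spike itself than a mean and standard deviation.
    """
    if not daily_new_domains:
        return []
    typical = median(daily_new_domains)
    mad = median(abs(count - typical) for count in daily_new_domains)
    # Guard against a zero MAD when most days have identical counts.
    spread = max(mad, 1.0)
    return [
        day
        for day, count in enumerate(daily_new_domains)
        if (count - typical) / spread > threshold
    ]


# Hypothetical counts of new referring domains per day.
counts = [12, 9, 14, 11, 10, 13, 240, 310, 12, 11]
print(find_spike_days(counts))
```

A flagged day is a prompt to look at the links, not proof of an attack.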

Method 2: Check the referring domains and pages graphs

Use the referring domains and pages graphs in Ahrefs’ Site Explorer to quickly identify spikes in your backlink profile.

Site Explorer > Enter domain > Overview

Now, it’s important to note that a sudden increase in referring domains could be a good thing. For example, one of your posts may have gone viral, or you could have had success with an outreach campaign.

But it could also be a sign of a negative SEO attack.

Here’s how to investigate further using Ahrefs Site Explorer:

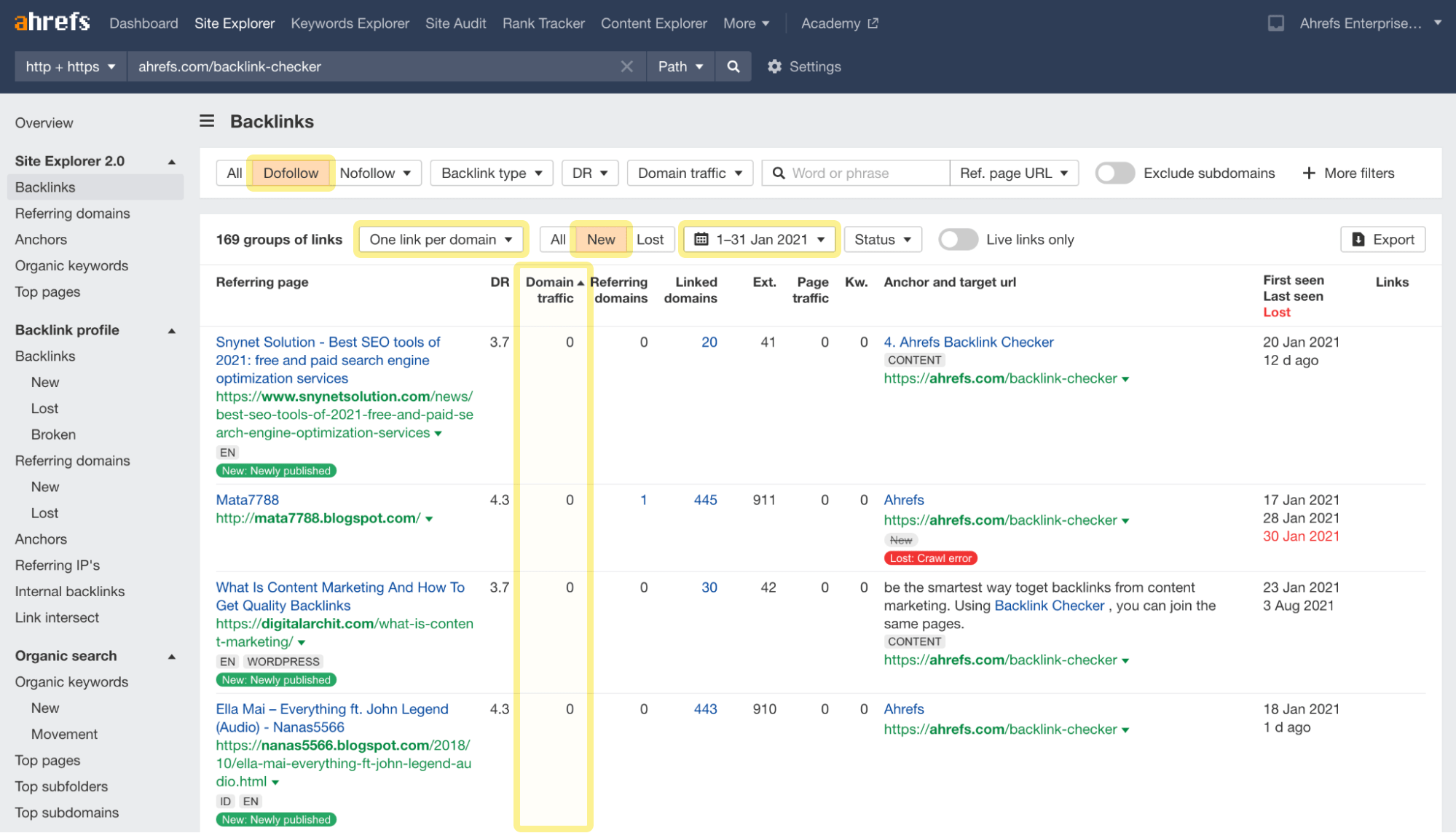

- Click the Backlinks report

- Change the mode to “One link per domain”

- Click the Dofollow filter

- Click the New backlinks filter

- Select the period when the spike occurred

- Sort the results by ascending Domain traffic

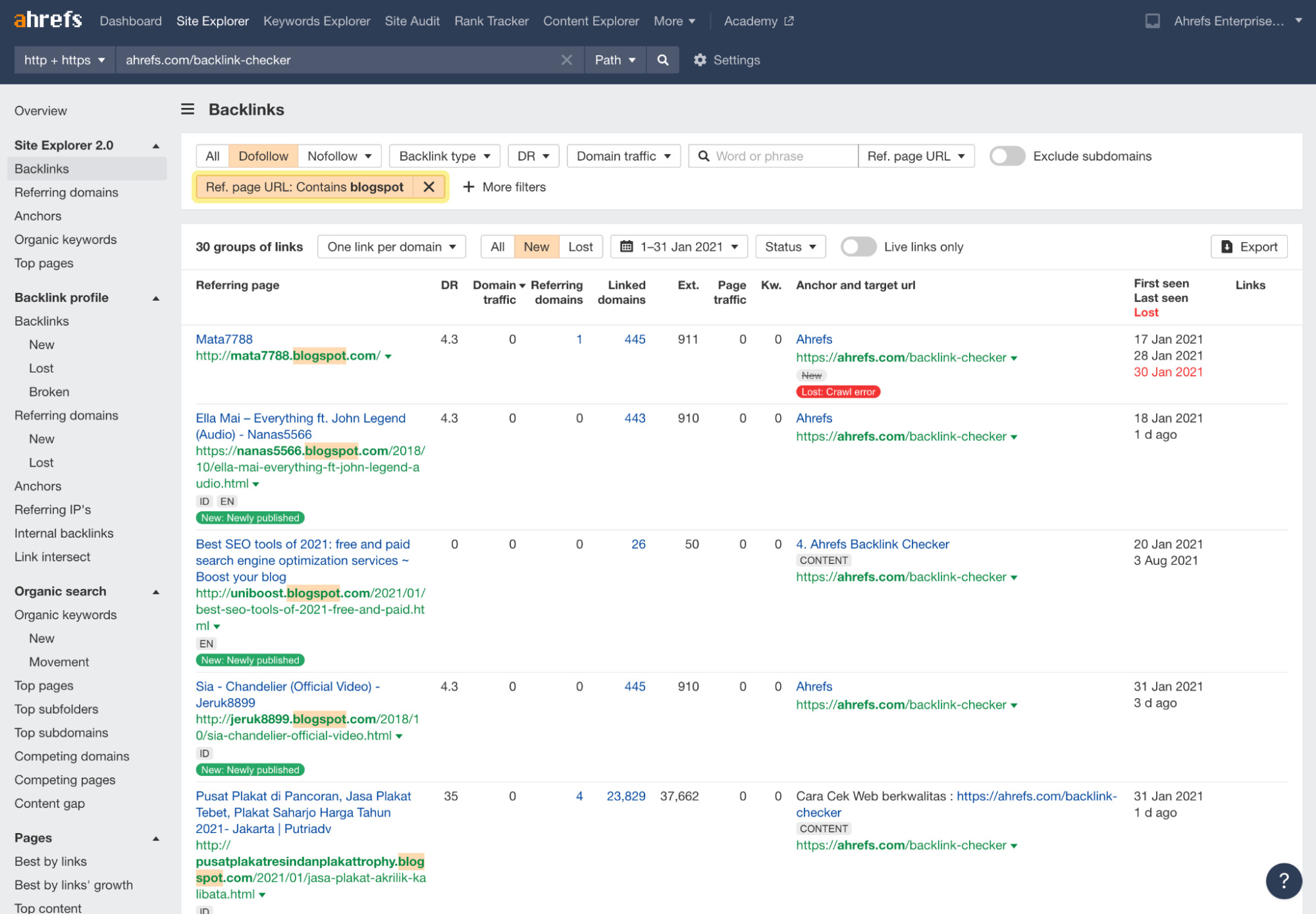

You’ll likely see some patterns in the referring pages and anchor texts. You can filter that too. In this example, I found some spam from blogspot.com:

Most link spam is unsophisticated, so you’ll quickly spot the trends if that’s the case.

However, I must warn you about clicking on fishy-looking websites and links. You’re better off not doing it because it can pose security threats.

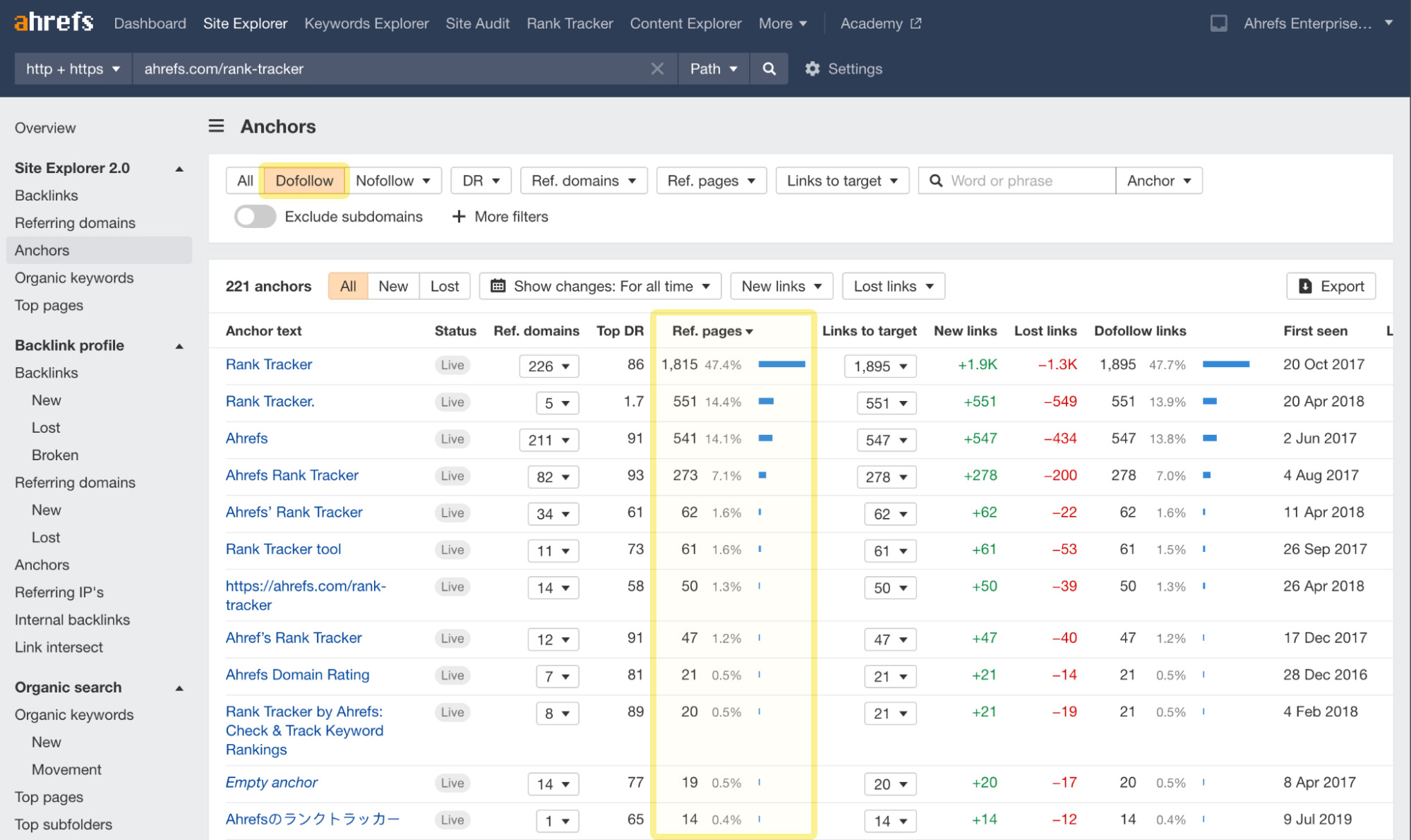

Method 3: Check the Anchors report

The first two methods are most effective for finding high-volume attacks, where someone blasts hundreds or thousands of links at your site.

But it’s also easy to spot an attempt to manipulate your anchor text ratio.

Here’s how to do it in Site Explorer:

- Click the Anchors report

- Select Dofollow links

- Look at the Ref. pages column with anchor text usage percentages

If you see an abnormally high percentage of keyword-rich anchors, it could be a sign of bad link-building practices or, indeed, a sneaky link-based negative SEO attack.
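To make “abnormally high percentage” a little more concrete, here is a minimal sketch that computes each anchor’s share from an exported list of anchor texts and flags non-brand anchors above a cutoff. The 10% cutoff, the brand-term matching, and the sample anchors are all assumptions for illustration; what counts as unnatural depends on your site.

```python
from collections import Counter


def anchor_share(anchors):
    """Return (anchor, percentage) pairs, most common first."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    if total == 0:
        return []
    counts = Counter(normalized)
    return [(anchor, 100 * n / total) for anchor, n in counts.most_common()]


def flag_heavy_anchors(anchors, brand_terms, max_share=10.0):
    """List non-brand anchors whose share exceeds max_share percent."""
    brand_terms = [term.lower() for term in brand_terms]
    return [
        (anchor, share)
        for anchor, share in anchor_share(anchors)
        if share > max_share and not any(term in anchor for term in brand_terms)
    ]


# Hypothetical export: one anchor text per dofollow referring page.
sample = ["Ahrefs"] * 6 + ["buy cheap backlinks"] * 3 + ["https://ahrefs.com"]
print(flag_heavy_anchors(sample, brand_terms=["ahrefs"]))
```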

In this case, I found the following anchor text shared by multiple referring domains and pages:

Given that there’s little chance multiple legitimate sites would link to us with such a long and specific anchor, this is likely some kind of link spam. We can investigate further by clicking the caret in the Ref. domains or Links to target column to reveal the linking sites and pages.

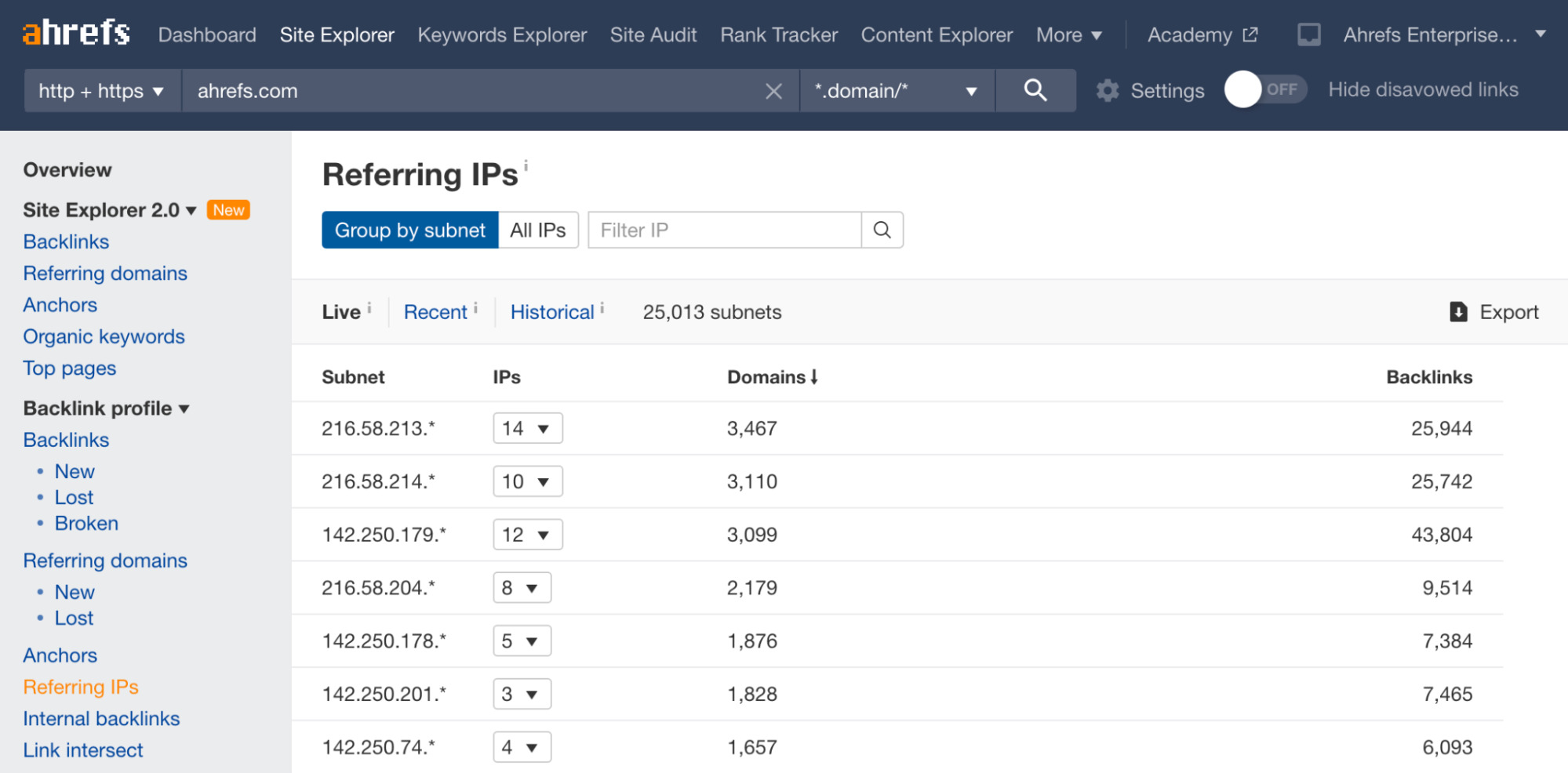

Method 4: Check the Referring IPs report

Having links from many referring domains on the same subnet IP can be another sign of a negative SEO attack.

Why? Because this often indicates that the sites are hosted in the same location.

If many sites are hosted in the same place, then chances are the same person owns them.

And if the same person owns them, then it’s probably a PBN.

To see a breakdown of this, check the Referring IPs report in Site Explorer.

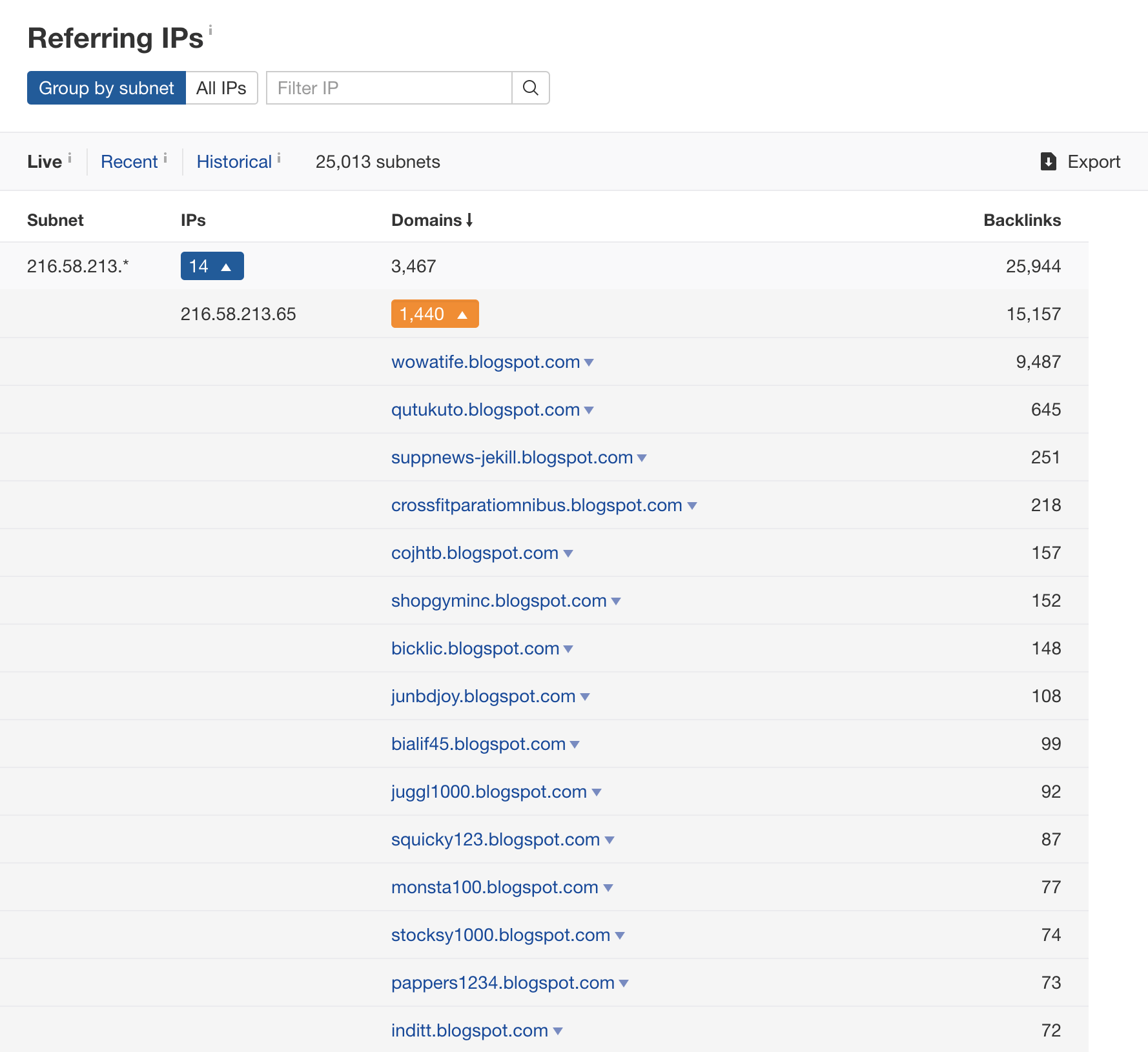

It’s important to note that having links from a few domains on the same subnet isn’t that unusual. But having hundreds or even thousands of referring domains from one subnet is fishy.
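If you’d rather check this from an export, the grouping is easy to sketch with Python’s standard `ipaddress` module. This assumes you already have (domain, IPv4 address) pairs and that “same subnet” means the same /24 network; both the cutoff of 100 domains and the sample data are hypothetical.

```python
import ipaddress
from collections import defaultdict


def group_by_subnet(domain_ips, prefix=24):
    """Map each IPv4 network of the given prefix to the domains hosted in it."""
    groups = defaultdict(set)
    for domain, ip in domain_ips:
        # strict=False lets us pass a host address and get its network back.
        network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
        groups[str(network)].add(domain)
    return groups


def crowded_subnets(domain_ips, min_domains=100, prefix=24):
    """Return (subnet, domain count) pairs with at least min_domains domains."""
    groups = group_by_subnet(domain_ips, prefix)
    crowded = [
        (network, len(domains))
        for network, domains in groups.items()
        if len(domains) >= min_domains
    ]
    return sorted(crowded, key=lambda item: item[1], reverse=True)


# Hypothetical referring domains and the IPs they resolve to.
pairs = [
    ("a.example", "203.0.113.5"),
    ("b.example", "203.0.113.77"),
    ("c.example", "198.51.100.9"),
]
print(crowded_subnets(pairs, min_domains=2))
```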

If we hit the carets and dig a little deeper, we see the same old blogspot spam:

How to fight back against a link spam attack

Getting spammy links removed is virtually impossible, so the only thing you can proactively do is disavow them.

This is where you upload a list of linking pages (or websites) to Google in a specific format, which effectively tells them, “I don’t vouch for these links—please ignore them.”

But here’s the thing:

Since the introduction of Penguin 4.0, which devalues link spam and runs in real-time, the consensus amongst SEOs is that there’s no need to disavow links unless you first experience the negative effects of them (i.e., ranking/traffic drops).

The reason being, Google is pretty good at ignoring obvious link spam, so disavowing is often just a waste of your time.

Furthermore, disavowing the wrong links can do more harm than good.

Here’s what Marie Haynes—an expert on Google Penalties—says about this:

I would say that for most sites, if you are being attacked by an onslaught of spammy links, you can just ignore them. However, I would still disavow links if any of the following is true:

- You have your own history of self-made links for SEO purposes in the past.

- You are in an incredibly competitive vertical. I believe there are tougher algorithms in place in these niches that can make negative SEO a little bit more effective.

- You see a drop in traffic that coincides with the onslaught of links and there is no other explanation for the drop.

Keep in mind that you should only disavow whole domains if you’re certain that none of the backlinks from them are legit. If you’re unsure about this whole process, consult an expert like Marie.
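If you do decide to disavow, the file itself is plain text: one URL per line, `domain:` in front of a domain to disavow the whole site, and `#` for comment lines. Here’s a minimal sketch that builds such a file from lists you have already vetted. The function name and the sample entries are hypothetical, and the code does nothing to judge whether a link deserves to be disavowed.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Build the text of a disavow file from vetted domains and URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Whole-domain entries use the "domain:" prefix.
    for domain in sorted({d.strip().lower() for d in domains if d.strip()}):
        lines.append(f"domain:{domain}")
    # Individual pages are listed as full URLs, one per line.
    for url in sorted({u.strip() for u in urls if u.strip()}):
        lines.append(url)
    return "\n".join(lines) + "\n"


# Hypothetical entries; replace them with links you have actually reviewed.
text = build_disavow_file(
    domains=["spam-network.example"],
    urls=["http://blog.example/cheap-links.html"],
    note="Link spam spike, reviewed manually",
)
print(text)
```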

2. Fake link removal requests

This is a particularly sneaky form of negative SEO where unethical SEOs send emails like this to sites that link to you:

Dear Webmaster

Our client’s site X has links on your page Y.

Due to recent changes in Google’s algorithm, we no longer require these links and request that you remove them.

Thank you,

Some SEO Company

If their motive isn’t clear from the email alone, they’re trying to get sites to remove your best links.

How fake link requests can harm your site

There should be no doubts about whether a link removal attack on your site will work. Such attacks are rare, but their impact can be huge.

Imagine losing many of your best backlinks overnight. That’ll cause your rankings to drop like a stone.

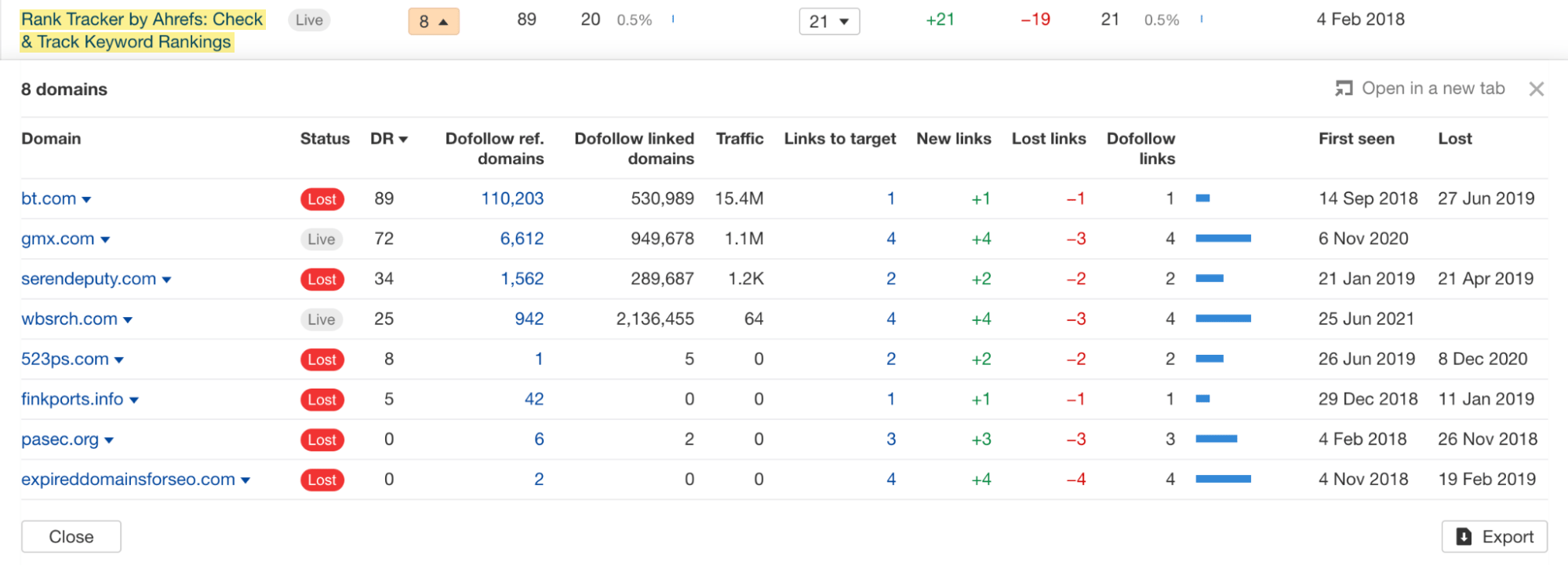

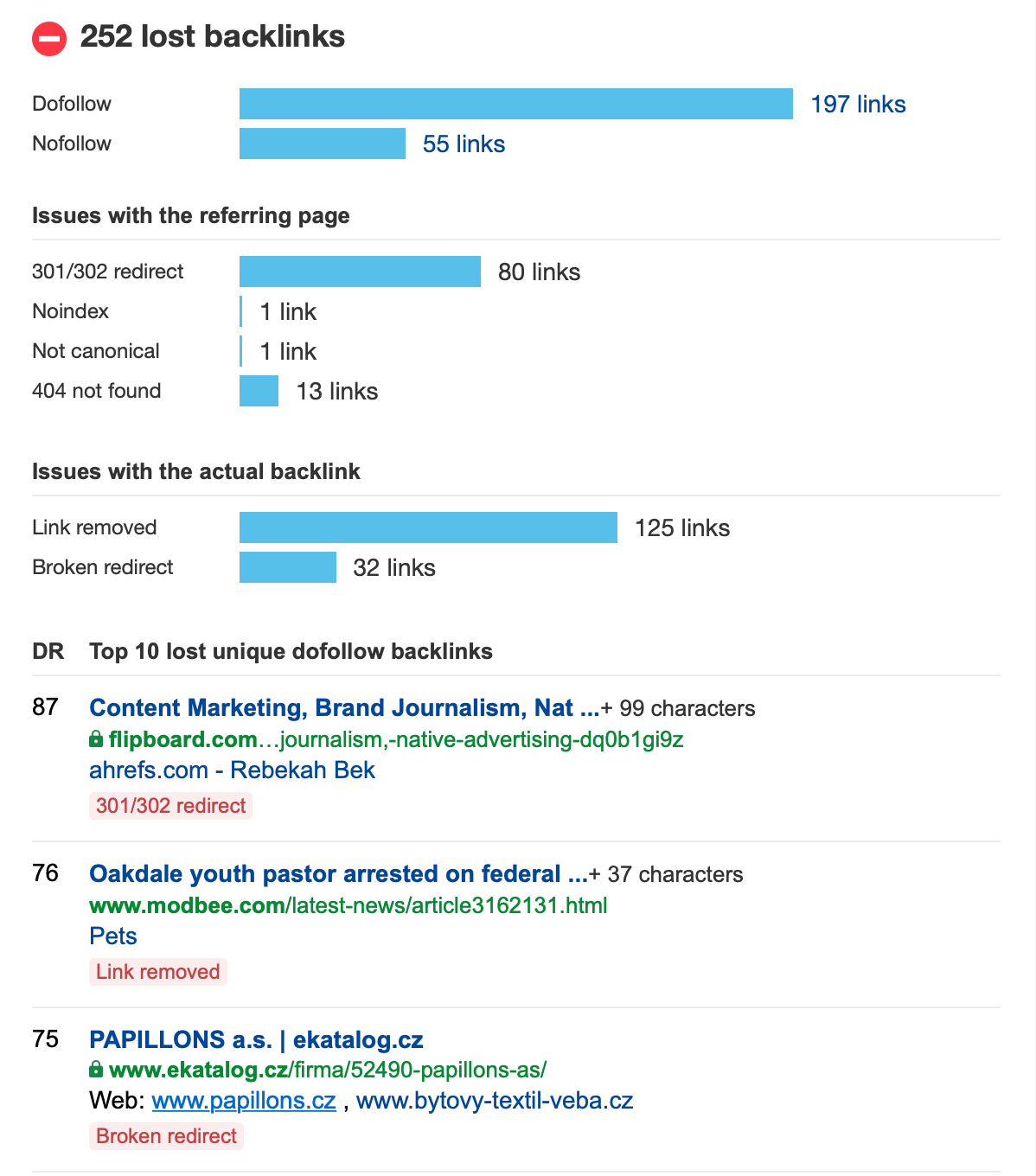

How to detect a link removal attack

There’s no way to stop the fake removal requests from going out—that’s out of your control.

But what you can do is look for signs of an active link removal attack and take action as soon as possible to protect your backlinks.

For this, you can use Ahrefs Alerts.

As well as showing you all new links pointing to your site, Ahrefs’ backlinks alerts can also tell you about lost backlinks.

You will then receive periodic emails alerting you to lost backlinks like this:

If you notice quality backlinks disappearing, you should investigate this further regardless of any negative SEO attack suspicion.

Often, there will be a legitimate reason for the removal.

For example, the page might have been removed, redirected, or the content updated.

But if you can’t see any apparent reason for many lost links, then it could be a sign of a link removal attack. In that case, it’s worth reaching out to the (previously) linking site and asking why your link was removed.

If someone did indeed send a fake link removal request, you’d quickly find out this way. And even if there was a legitimate reason for removing the link, they might consider adding it back.
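Before emailing anyone, it can help to confirm that the link is really gone rather than moved elsewhere on the page. The sketch below assumes you have already downloaded the referring page’s HTML yourself; it simply checks whether any `<a href>` on the page still points at your domain. The function names and the sample markup are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag in a document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def still_links_to(page_html, target_domain):
    """Return True if the page has a link to target_domain or a subdomain."""
    collector = _LinkCollector()
    collector.feed(page_html)
    target = target_domain.lower()
    for href in collector.hrefs:
        host = (urlparse(href).hostname or "").lower()
        if host == target or host.endswith("." + target):
            return True
    return False


# Hypothetical HTML of a page that used to link to example.com.
html = '<p>See <a href="https://other.example.net/guide">this guide</a>.</p>'
print(still_links_to(html, "example.com"))
```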

How to fight back against fake link removal requests

Once you know that a link removal attack is in progress, there are two courses of action:

- If your link has already been removed, reach out to the site, let them know that the request did not originate from your company, and ask them to reinstate the link.

- If the site is still linking, keep an even closer eye on your backlink alerts and take appropriate action should you lose any more links.

3. Content scraping

Content scraping is when someone copies your content and posts it verbatim on another site.

Most of the time, it isn’t done with malice. People who scrape your content are usually just trying to get free content. They’re not trying to hurt your site, but it can still happen.

How content scraping attacks can harm your site

Google doesn’t like it when content is duplicated across multiple sites on the web.

They will typically pick one version to rank and ignore the rest.

I should point out here that there’s nothing wrong with syndicating your content on high-authority sites with a link back to your original post.

But when someone copies your content without attribution, that can be bad news.

You would hope that Google would be smart enough to recognize your site as the original source of the content. And most of the time, they do.

But not always.

This is most often the case when your content is scraped and posted on an authoritative website. Sometimes Google sees that authority as meaning the content must have originated from there.

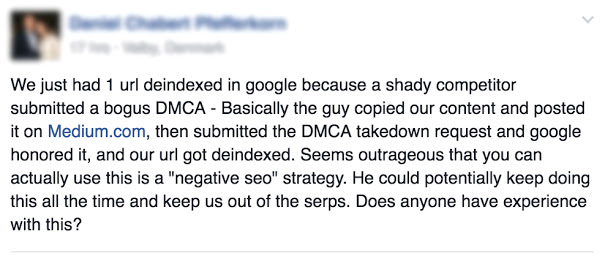

And if the person scraping and republishing your content also does this…

… then you’ve really got a problem.

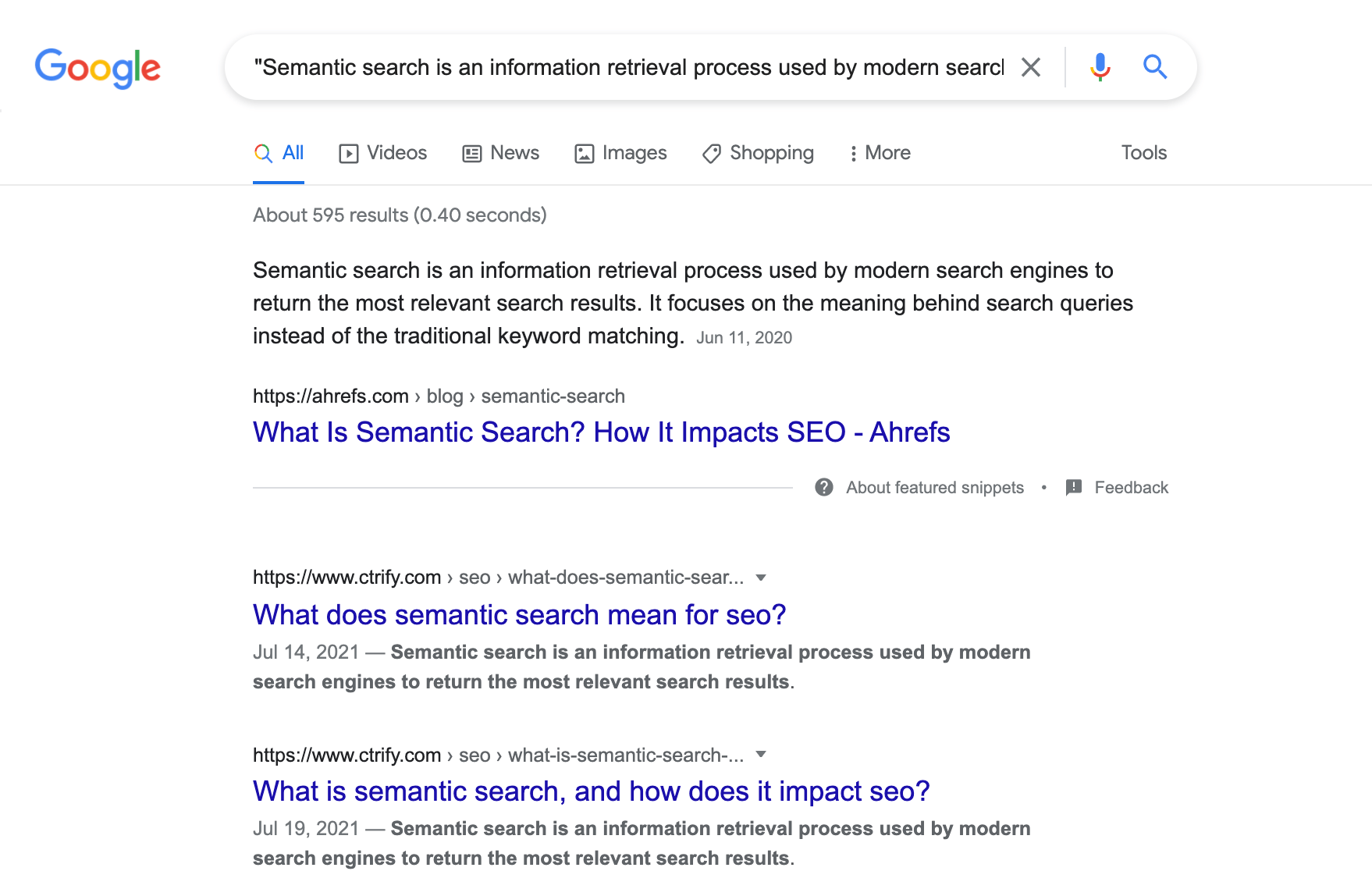

How to detect a content scraping attack

The quickest and easiest way to find out if your content has been scraped is to simply copy a paragraph from your page and paste it into Google (with quotation marks).

Beware that Google only searches for up to 32 words and will ignore anything in the query above that limit.
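If you check paragraphs regularly, trimming them to that limit by hand gets tedious. This small sketch builds a quoted query from the first 32 words of a paragraph and turns it into a search URL you can open in a browser. The helper names are mine, and the sample paragraph is only a placeholder.

```python
from urllib.parse import quote_plus

MAX_QUERY_WORDS = 32  # the word limit mentioned above


def exact_match_query(paragraph, max_words=MAX_QUERY_WORDS):
    """Quote the first max_words words of a paragraph for an exact-match search."""
    # Drop straight quotes inside the text so they don't end the phrase early.
    words = paragraph.replace('"', " ").split()
    return '"' + " ".join(words[:max_words]) + '"'


def google_search_url(query):
    """Build a search URL for the query (to open manually in a browser)."""
    return "https://www.google.com/search?q=" + quote_plus(query)


paragraph = "Content scraping is when someone copies your content and posts it verbatim on another site."
print(google_search_url(exact_match_query(paragraph)))
```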

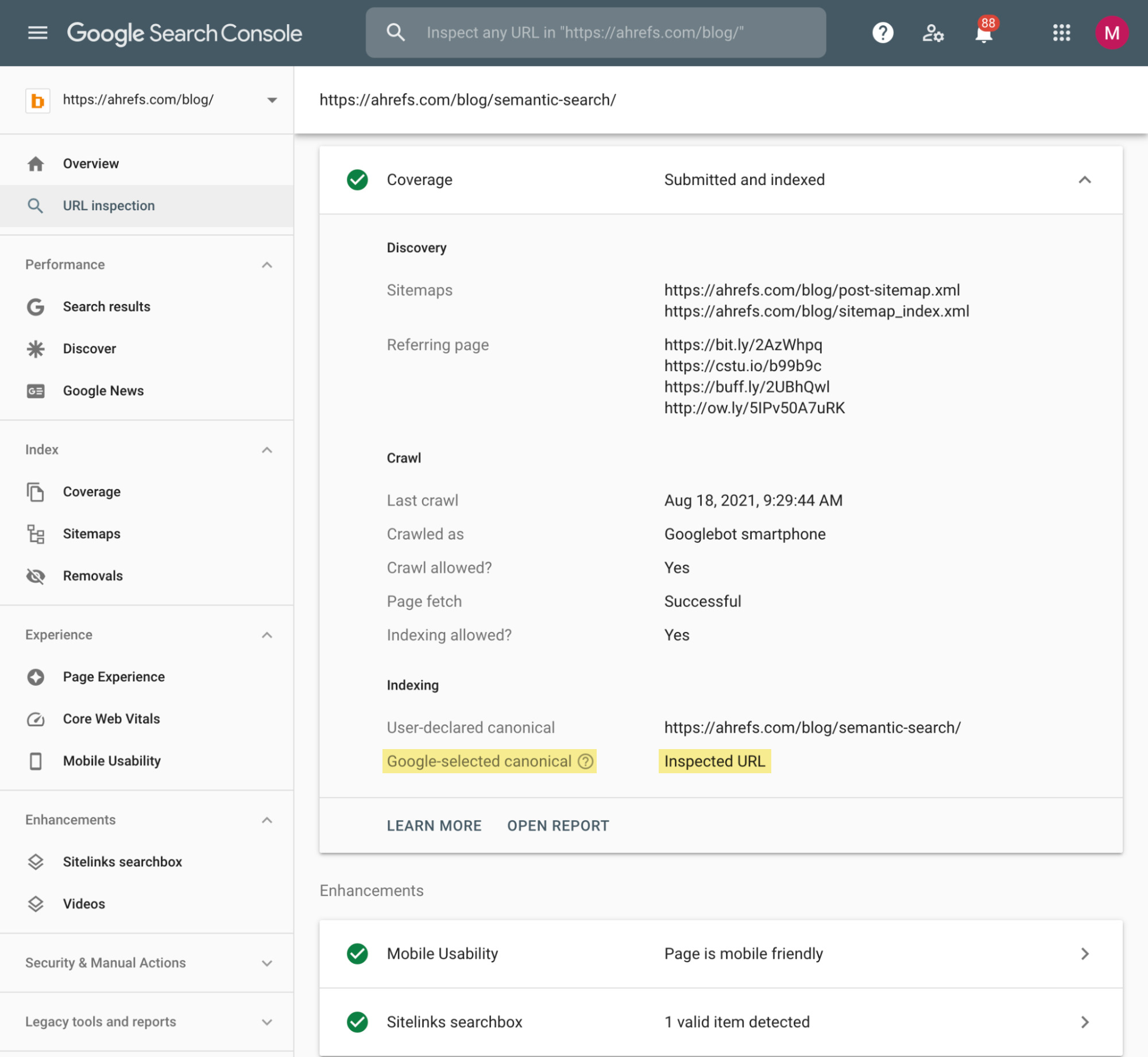

If you suspect that some of your URLs may have been harmed by content scraping, you can always verify their status in Google Search Console. What you’re looking for is something called a “Google-selected canonical.”

You’ll find it under the Coverage section after pasting the URL into the URL inspection bar at the top of GSC:

You want to see the Inspected URL there. It means that Google considers the inspected URL as the most authoritative version of the content. If you see another internal URL there, you have a duplicate content issue. If you see an external URL there, you’re facing negative SEO.
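The three outcomes above boil down to a simple comparison. As a sketch, assuming you copy both URLs out of Search Console by hand, the check might look like this. The labels and the normalization (ignoring the scheme, a leading “www.”, and a trailing slash) are my own simplifications.

```python
from urllib.parse import urlparse


def _normalize(url):
    """Reduce a URL to (host, path, query) for a forgiving comparison."""
    parts = urlparse(url.strip())
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    return host, path, parts.query


def classify_canonical(inspected_url, google_selected_canonical):
    """Label the relationship between a page and its Google-selected canonical."""
    inspected = _normalize(inspected_url)
    selected = _normalize(google_selected_canonical)
    if inspected == selected:
        return "ok"
    if inspected[0] == selected[0]:
        return "internal duplicate"
    return "external copy"


# Hypothetical values copied from the URL inspection result.
print(classify_canonical(
    "https://example.com/blog/post/",
    "https://scraper.example.net/blog/post/",
))
```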

For multiple pages, that’s going to be a time-consuming task. So instead, you can use tools made explicitly for finding scraped content at scale, such as Copyscape.

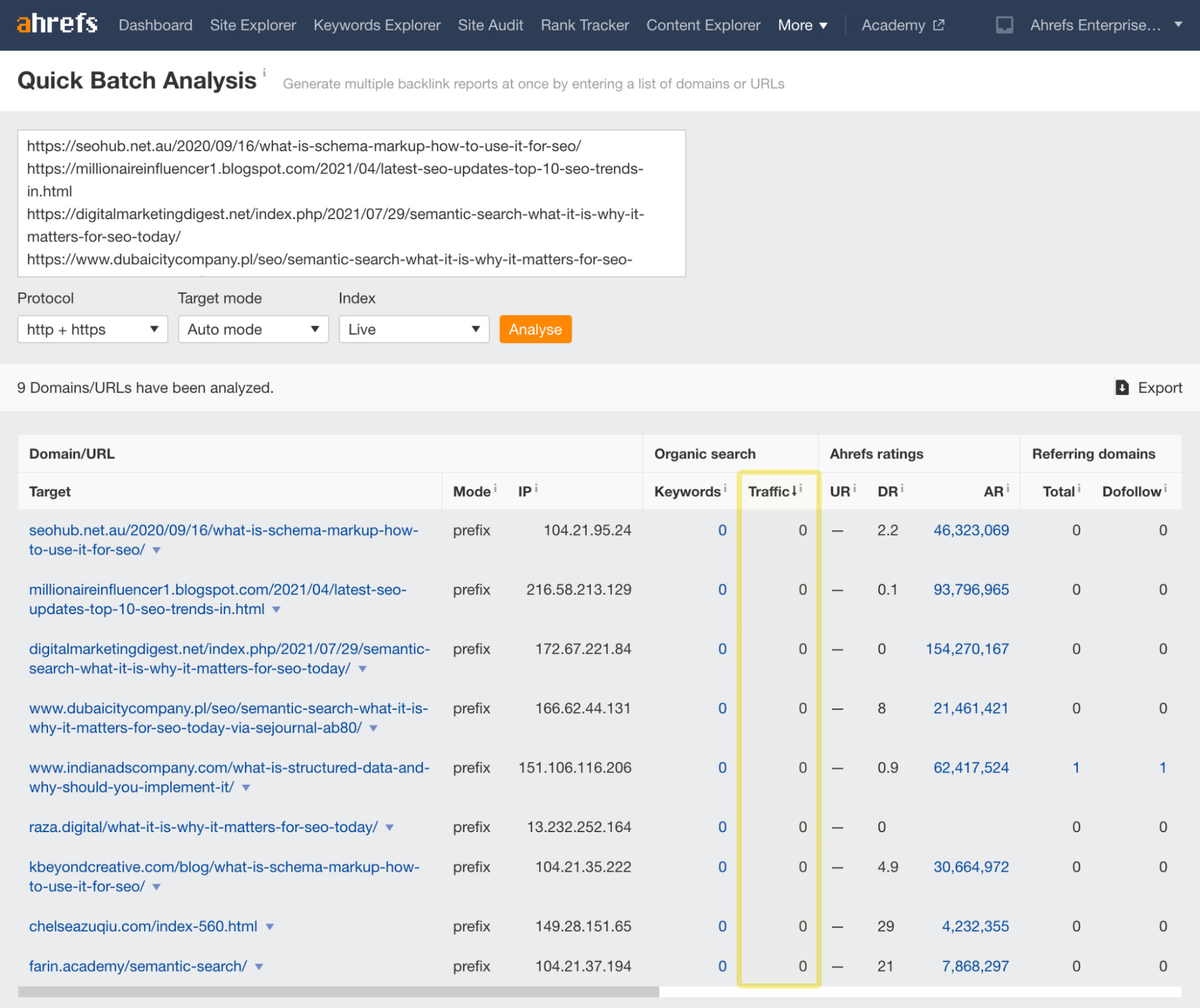

If you have a list of duplicate external URLs, you can then use the Batch Analysis tool and check if any of those URLs receive organic traffic. Sort the URLs by traffic:

If you find any URL that gets organic traffic, then their content scraping was successful.

However, as I’ve already mentioned, there’s a very low chance that this tactic would work.

How to fight back against content scraping

You only need to “fight back” against content scraping if it’s causing problems.

That said, theft is theft. So if you’re not happy about someone stealing your content, then you can do three things:

1) Ask for an attribution link

This rarely happens, but if the site that has scraped your content is high-quality, and you feel that a link from them may help your rankings, then reach out and ask them to add an attribution link to the scraped post.

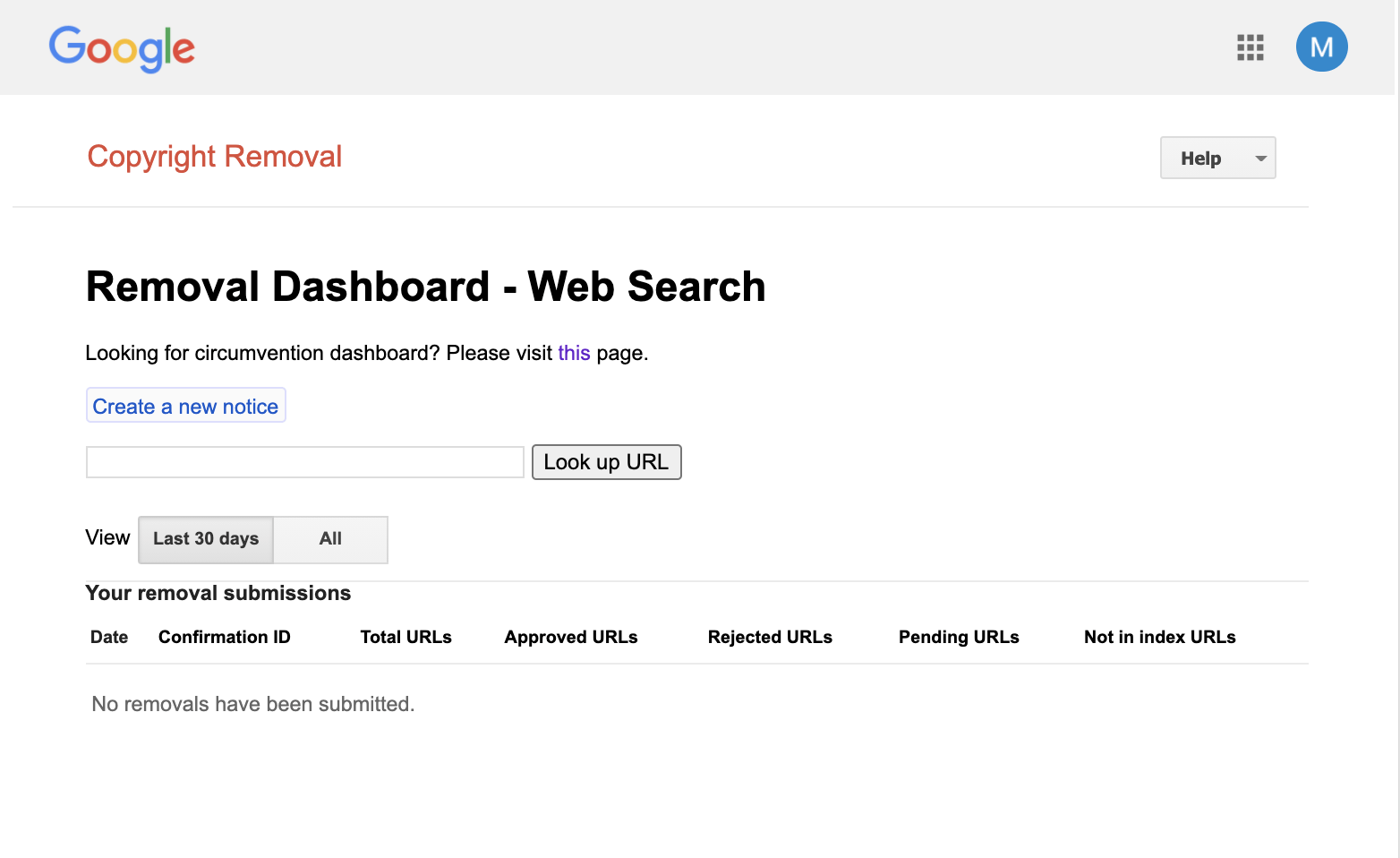

2) File a DMCA complaint

You’ll need to escalate things if the scraped content steals your organic traffic. Just make sure that there is malicious intent behind it with no chance of getting a canonical attribution link before doing this.

To get Google to remove the duplicates, you’ll have to file a DMCA (Digital Millennium Copyright Act) complaint against each page that has copied your content.

You can do that using Google’s DMCA dashboard.

Unfortunately, it’s not a quick process.

If you want to go through it, head to Google’s legal help resource and click through the options describing your problem. Once you get to the “Create request” step, it’s important to provide as much detail as possible to ensure each takedown request is successful.

For more information on the DMCA takedown process, see this guide from The Copyright Alliance.

IMPORTANT

A DMCA removal request should be your last resort in protecting your copyrighted content online. You should only use it when a site blatantly infringes your copyright (without attribution) and will not respond to requests to remove (or attribute) the content.

3) Ensure proper internal linking structure

If the scraped content is identical to the original, it points back to your website with its original internal links. These links won’t bring you any link equity, but they are good at signaling that it’s scraped content.

On the other hand, if they deleted all the links, it should still be easy for search engines to figure out the original because it usually has much better internal and external link profiles. A proper internal linking structure is one of the most important on-page SEO tactics.

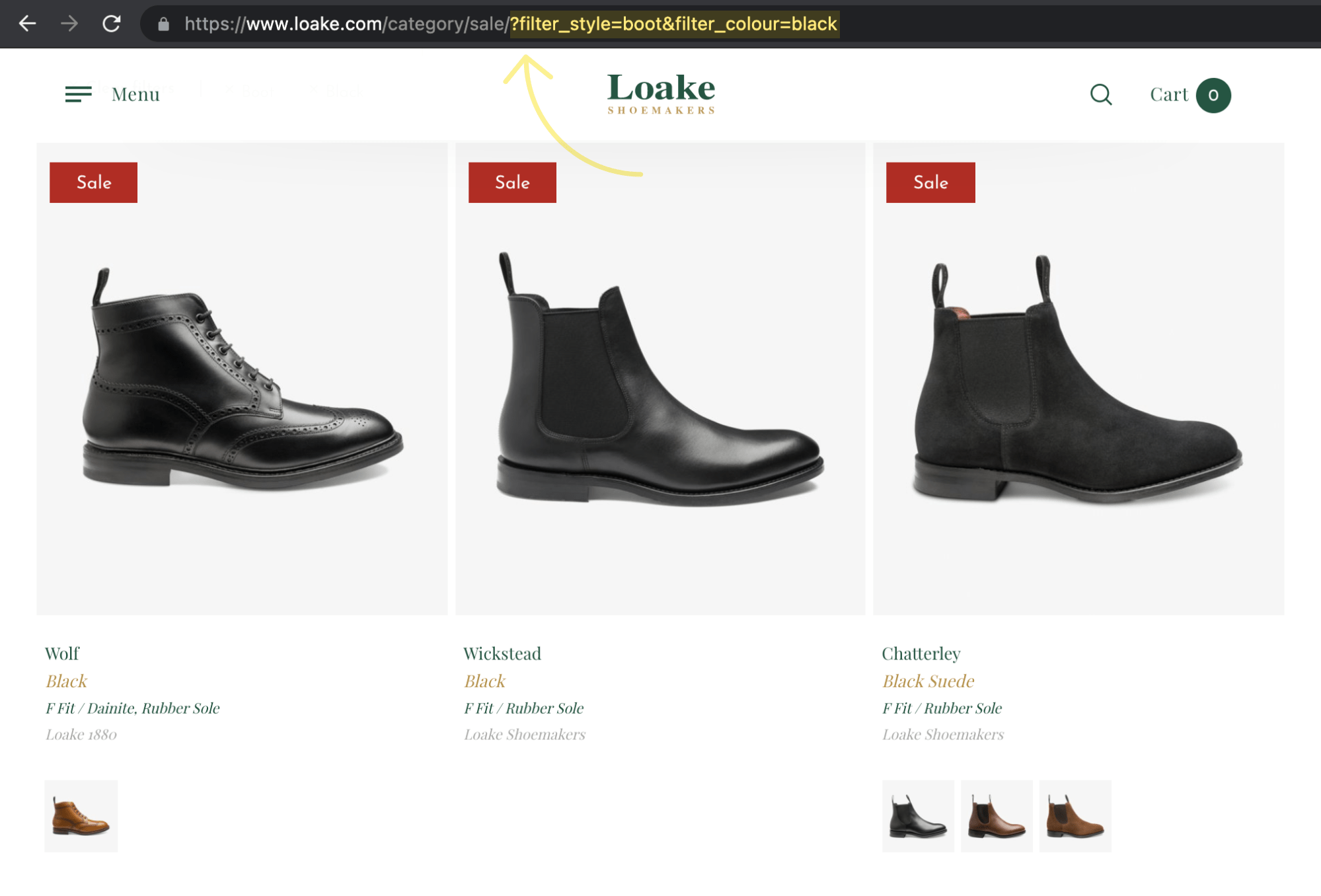

4. False URL parameters

URL parameters are values set in a page’s URL string. In the example below, the parameter ‘size’ is ‘small’:

http://www.example.com/?size=small

These parameters are commonly used in ecommerce (and other) systems to filter and sort pages.

How false URL parameters can harm your site

URL parameters can cause all sorts of indexing issues if your site is not well configured.

One page can end up getting indexed multiple times, with only slight variations in content.

The shady SEO can use this to their advantage.

How? By linking to pages on your site using fake parameters.

Google can follow these links and—if the site isn’t set up correctly—index the pages.

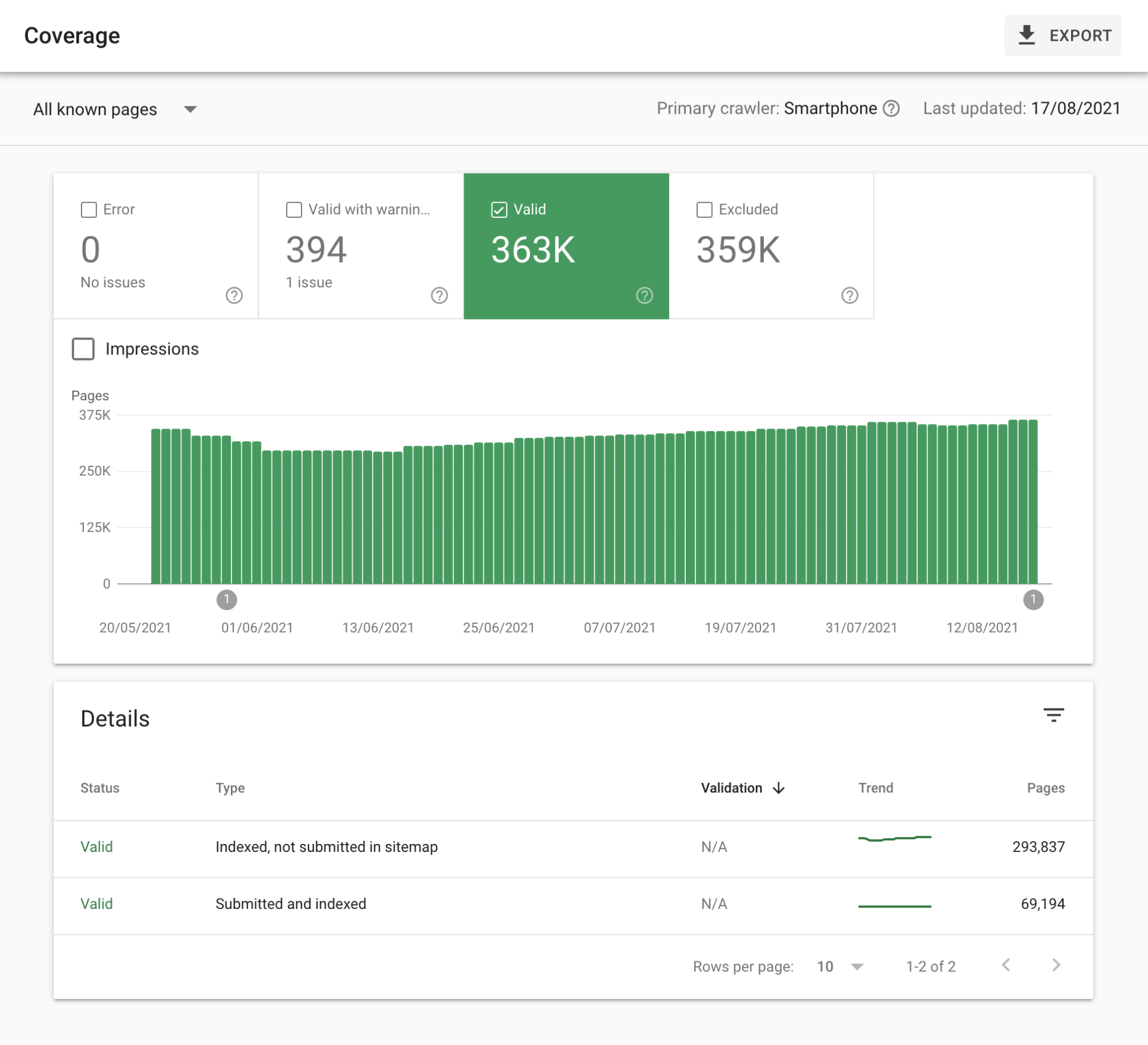

How to detect a false parameter attack

Perhaps the easiest way to spot this kind of attack is to check the Coverage report in Google Search Console.

If you see a significant spike in indexed pages, then it could indicate an attack.

How to fight back against false parameter attacks

The best way to “fight back” against such attacks is to take preventive measures in the first place.

Fortunately, this is easy to do.

I’ve added a fake parameter to the URL of our SEO tips post.

The page loads at that URL.

But…

We have the self-referencing canonical tag in place that lets Google know what the de-facto version of this page is.

<link rel="canonical" href="https://ahrefs.com/blog/seo-tips/" />

This tells them they should index only the canonical, parameter-free URL and ignore any additional parameters.

In most cases, deploying these self-referencing canonicals should be enough to prevent this kind of SEO attack.
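You can test your own pages the same way: request a URL with a made-up parameter and confirm that the canonical tag in the response still points at the clean URL. The sketch below works on HTML you have already fetched. It assumes the parameter-free URL is the one you want indexed, which won’t hold for sites where parameters legitimately change the content. The parameter name `fake` is just a placeholder.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit, urlunsplit


class _CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attrs.get("href")


def strip_query(url):
    """Return the URL without its query string and fragment."""
    scheme, netloc, path, _query, _fragment = urlsplit(url)
    return urlunsplit((scheme, netloc, path, "", ""))


def canonical_ignores_parameters(requested_url, page_html):
    """True if the page's canonical tag equals the parameter-free URL."""
    finder = _CanonicalFinder()
    finder.feed(page_html)
    return finder.canonical == strip_query(requested_url)


html = '<head><link rel="canonical" href="https://ahrefs.com/blog/seo-tips/" /></head>'
print(canonical_ignores_parameters("https://ahrefs.com/blog/seo-tips/?fake=1", html))
```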

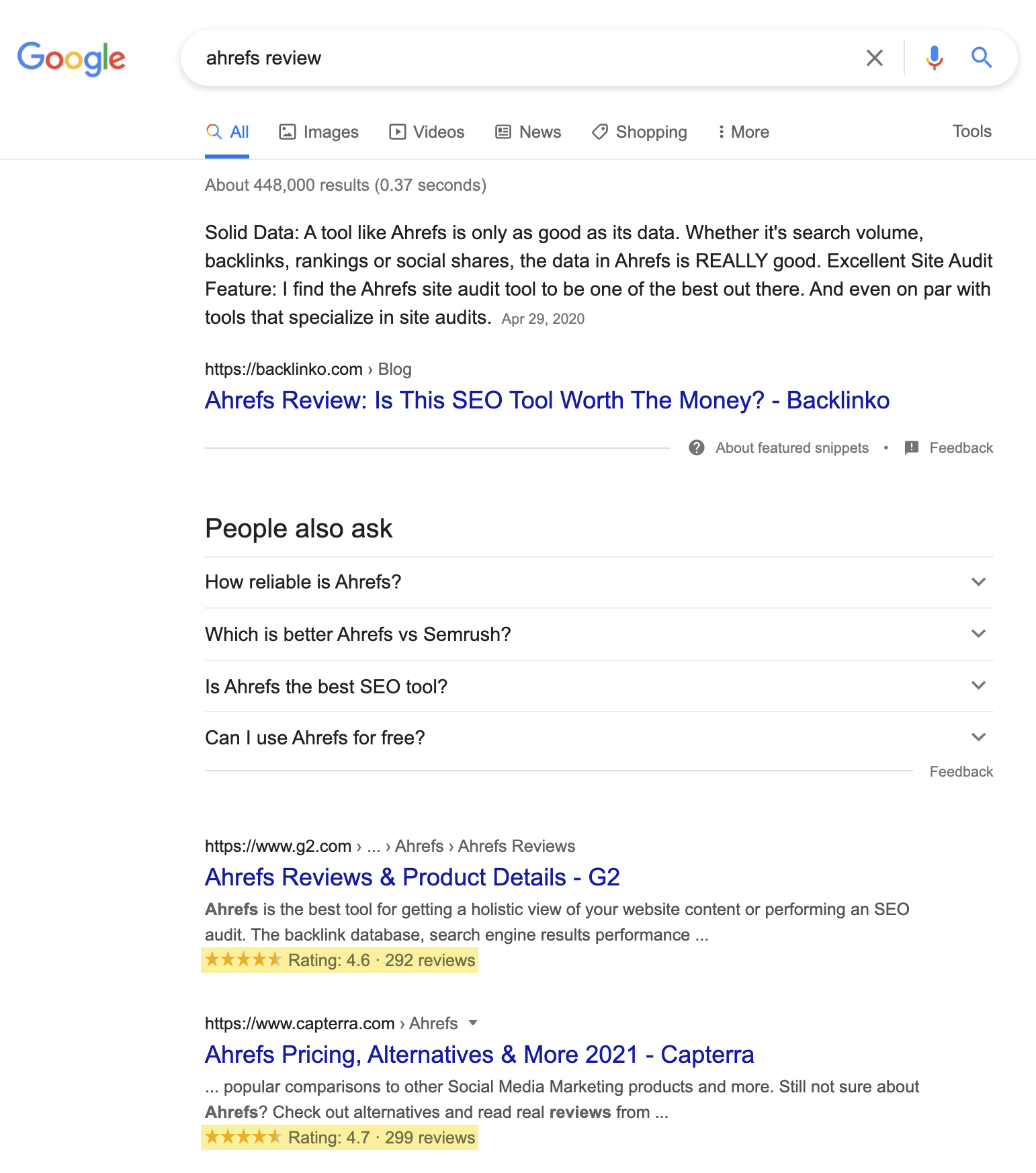

5. Fake reviews

You probably check online reviews when you’re about to visit a restaurant or buy stuff online. Google’s rich snippets can directly show ratings and review metrics in the SERP, which help catch attention and make an impression on people—positive or negative.

How fake reviews can harm your site

Imagine that users see bad review ratings for your business in the SERP. You don’t want this kind of influence on their buying process.

If you are in SaaS or any other B2B industry, fortunately, the most famous review platforms like G2 or Capterra have review authenticity processes in place. Your review won’t be published until it’s approved. So it would be hard to leverage such platforms for a negative SEO attack.

If you’re a local business, like a restaurant, people research you on Google My Business, Yelp, TripAdvisor, and other local review services. It’s much easier to manipulate these, but it’s in their best interest to keep the reviews as objective and neutral as possible.

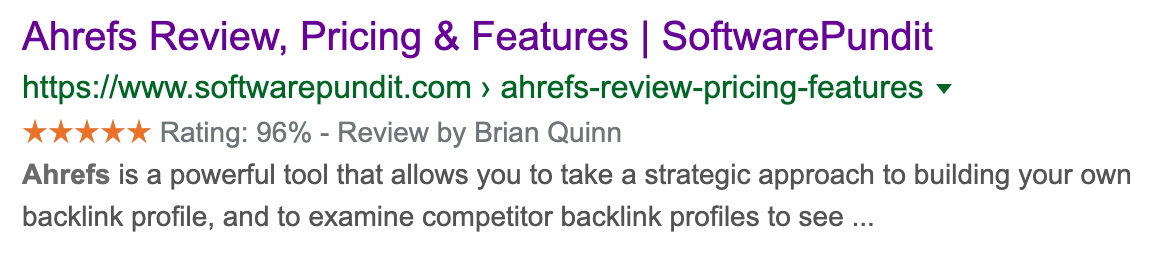

However, review platforms are not the only options here. Google can also display review rich results for editorial reviews.

Anyone can publish a bad review of your product or service, and it may rank well in the SERPs. It can also be seen as a rich result if the schema markup is set up correctly.

How to detect fake reviews

Keep an eye on what appears in the SERPs for your brand reviews. Any monitoring would be overkill here; just run the search once a month and see for yourself. If you want to make sure you’re also covering local SERPs, search from more locations.

How to fight back against fake reviews

If you’re dealing with fake reviews on review platforms, report them. Don’t expect the platforms to take them down right away. It can be a rather slow process. If the problem is urgent, trying to get in touch with someone from the review platform will be your best bet.

Sidenote.

Always make sure that those reviews are fake. Don’t try to report and take down genuine negative reviews. Deal with them, say sorry, offer compensation. Adopt review response processes.

You can also fight back by encouraging more of your clients to leave reviews. Again, keep this genuine. Prompting promoters of your service is fine; buying your clients off in exchange for a positive review is not.

Testimonials and reviews are powerful weapons. The more of them you have, the more difficult it is to be influenced by fake reviews. Be responsive, emphasize the undeniably genuine ones, and you’ll be fine.

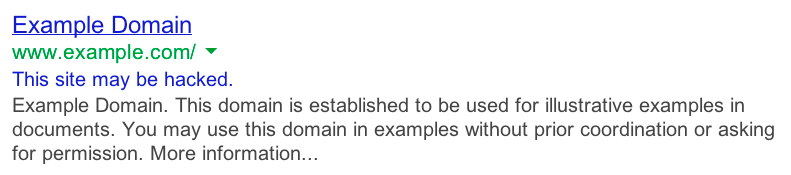

6. Hacking your site

Hacking and cyber-attacks are forms of negative SEO that cross the line into criminality.

How hacking can harm your site

Google wants to protect its users and takes a dim view of any site hosting malware (or linking to sites that do).

If they don’t bowl it straight out of the SERPs, they will add a ‘this site may be hacked’ flag to any results for the site, as Google shows here:

I’m sure you wouldn’t click on a result like that. So if your site gets flagged as hacked, expect to see your rankings tank.

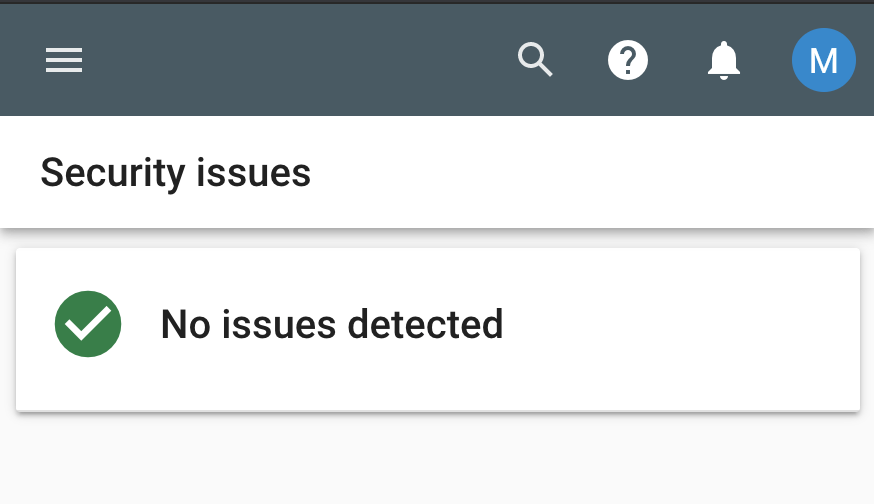

How to detect a website hack

Of every negative SEO attack on our list, this is often the easiest one to detect.

Hacking usually wreaks havoc on your website. You can’t miss it.

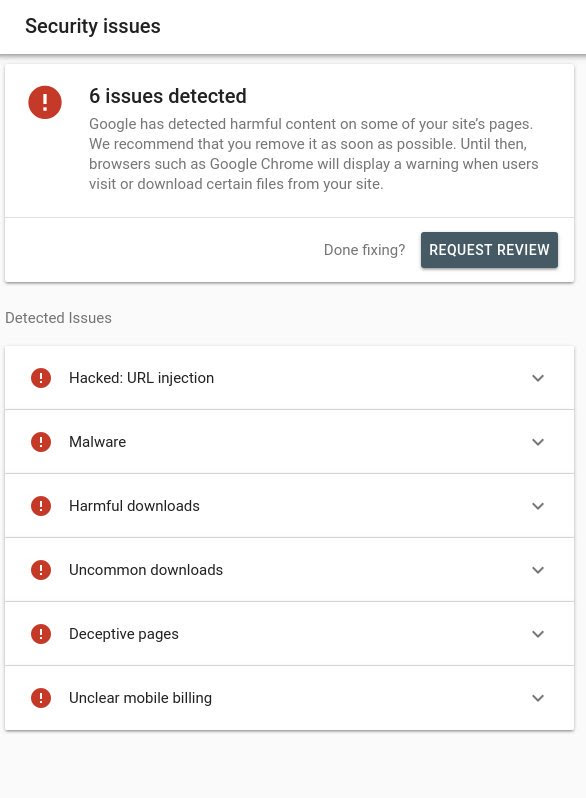

But if you feel like you might have, head over to the “Security issues” tab in Google Search Console. What you want to see is a screen like this:

Not this:

How to fight back against website hacks

Prevention is the key. The strength of your website depends on your server settings and security.

If you’re unfortunate enough to have already been hacked, then I’m sorry to say that you probably have a big task on your hands.

So prevention is the best cure here.

Now, I’m not going to attempt to explain everything you should be doing to secure your site against hacking here. That’s a post in its own right.

But I will share a few tips to get you off the ground:

- Install a security plugin. Check this plugin selection for WordPress.

- Use strong passwords. Sorry, but “password1” isn’t going to cut it for your WordPress login. Using a strong password will help prevent brute-force attacks.

- Keep your CMS (and plugins) up to date. Enabling automatic updates is your best bet.
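On the password tip: if you don’t use a password manager that generates passwords for you, Python’s standard `secrets` module can produce one. This is a minimal sketch; the default length of 20 and the minimum of 12 are my own assumptions rather than any official requirement.

```python
import secrets
import string

# Letters, digits, and punctuation; trim this if your CMS rejects some symbols.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length=20):
    """Return a random password drawn from ALPHABET using a secure RNG."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


print(generate_password())
```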

That’s a very basic overview, mind. So here are some of the best website security tutorials on the web:

Pro tip

If you have the budget, I would also recommend securing your site with services such as Sucuri.

Install the software on your site, and Sucuri will actively monitor for hacks, changes, brute-force login attempts, and more.

You’ll get an alert if anything looks untoward. Even better, if you do get hacked, they will clean up your site for you as part of the service.

7. DDoS attacks

This may also count as hacking, but instead of messing up your website, DDoS attacks aim to shut it down completely. DDoS stands for distributed denial-of-service, a malicious attempt to prevent legitimate requests and traffic from reaching your website by flooding your server or its surrounding infrastructure until its resources are exhausted.

How DDoS attacks can harm your site

Generally speaking, during a DDoS attack your server (and, therefore, your website) won’t work unless you have services capable of preventing and mitigating such attacks.

Having your website or a few pages unavailable due to server maintenance is fine. Google considers the 503 Service Unavailable error as a temporary thing. However, if this lasts for a more extended period, it may cause deindexation.
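For planned downtime, that means answering with a 503 rather than an error page that returns 200 or 404. Here’s a minimal sketch of a maintenance response written as a plain WSGI application. The `Retry-After` header is a standard HTTP header that hints when to try again; including it, and the one-hour value, are my assumptions and not something the points above depend on.

```python
def maintenance_app(environ, start_response):
    """Minimal WSGI app that answers every request with a temporary 503."""
    body = b"Down for maintenance. Please try again shortly.\n"
    headers = [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
        # Hint, in seconds, for when clients may retry (assumed value).
        ("Retry-After", "3600"),
    ]
    start_response("503 Service Unavailable", headers)
    return [body]
```

To try it locally, you could serve `maintenance_app` with `wsgiref.simple_server.make_server` from the standard library; in production, your web server or CDN would normally handle this instead.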

A sneakier form of a DDoS attack might be one that doesn’t shut your site down entirely but instead slows it down. Not only would it make the user experience worse, but there is a chance that it would harm your ranking as well since page speed and related Core Web Vitals are ranking factors.

How to detect a DDoS attack

Make sure you or your engineering team monitor incoming traffic and requests. It helps detect the sneakier DDoS attacks, but the huge ones can shut down your website within a few seconds.
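As a rough idea of what such monitoring involves, the sketch below counts requests per minute from a list of timestamps (for example, parsed from your access log) and flags minutes that are far busier than a typical one. The factor of 5 and the sample data are assumptions; real monitoring tools use much richer signals.

```python
from collections import Counter


def requests_per_minute(timestamps):
    """Count requests in each minute; timestamps are Unix seconds."""
    return Counter(int(ts) // 60 for ts in timestamps)


def busy_minutes(timestamps, factor=5.0):
    """Return the minutes whose request count exceeds factor times the median."""
    per_minute = requests_per_minute(timestamps)
    if not per_minute:
        return []
    counts = sorted(per_minute.values())
    baseline = counts[len(counts) // 2]  # middle value as the typical minute
    return sorted(
        minute for minute, n in per_minute.items() if n > factor * baseline
    )


# Hypothetical traffic: five quiet minutes, then a burst in minute 5.
quiet = [minute * 60 + i for minute in range(5) for i in range(10)]
burst = [5 * 60 + (i % 60) for i in range(200)]
print(busy_minutes(quiet + burst))
```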

The largest recorded attack reached 2.54 Tbps. That’s a bandwidth ballpark comparable to some video and streaming platforms.

How to fight back against DDoS attacks

This is something that you or your team can’t take care of directly in the vast majority of cases.

Your best bet is to use CDNs, dedicated servers, and other services with huge network infrastructures that often have their own DDoS protection solutions. These services also usually offer load balancing and origin shielding for the best possible protection against traffic and request spikes on your web hosting server.

Final thoughts

I’ve listed the most common types of negative SEO attacks. This list is not exhaustive, but it should cover most of the negative SEO cases you’re likely to encounter.

As said multiple times throughout the post, negative SEO is very unlikely to work these days. However, it’s good to be vigilant, so keep a close eye on your website, its traffic, and backlinks.

If you have any experience with negative SEO, questions, or comments, ping me on Twitter.

Source: ahrefs.com, originally published on 2021-08-24 06:00:21
